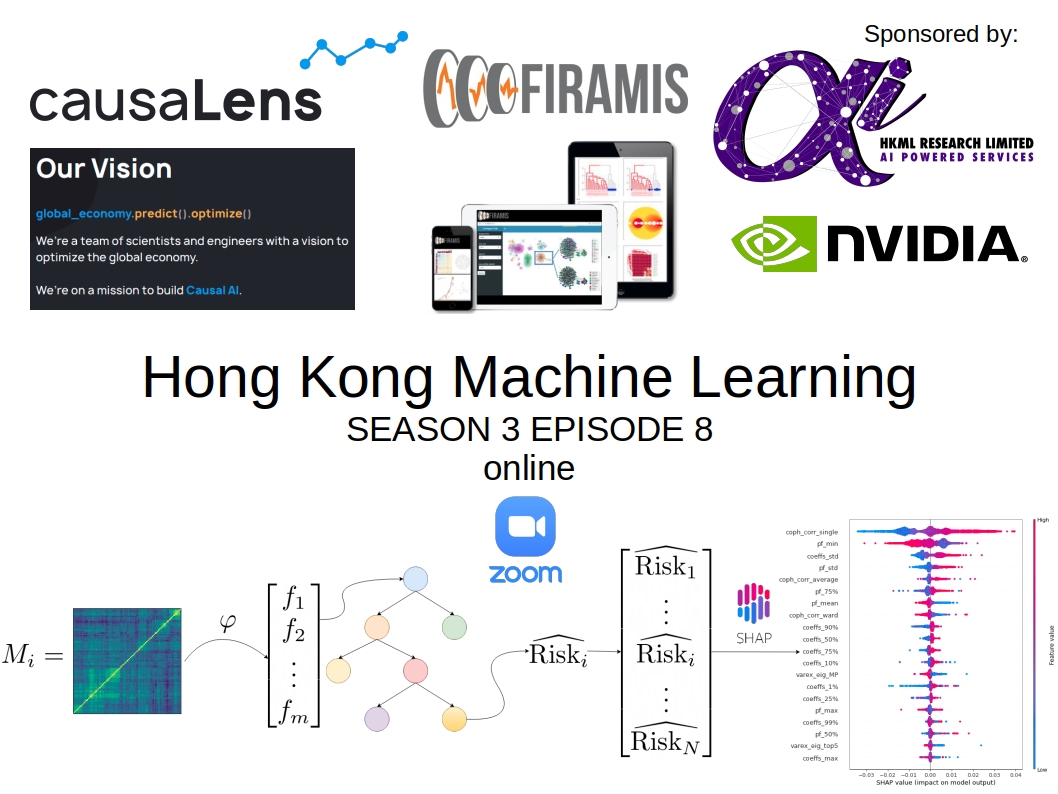

Hong Kong Machine Learning Season 3 Episode 8

17.02.2021 - Hong Kong Machine Learning - ~3 Minutes

When?

- Wednesday, February 17, 2021 from 7:00 PM to 9:00 PM (Hong Kong Time)

Where?

- At home, on Zoom. All meetups will be held online until the COVID-19 crisis is over.

Programme:

Talk 1 (Chaired by Gautier Marti): Explainable, accelerated machine intelligence in finance and insurance

Jochen Papenbrock, NVIDIA https://www.nvidia.com/en-us/industries/finance/

Abstract:

How can we build Explainable, Trustworthy, and Responsible AI for the Financial Service Industry?

In this presentation, Dr Jochen Papenbrock will talk about the importance of explainable models, as opposed to purely black-box models, in financial services, from both an operational and a regulatory viewpoint.

He will also discuss the benefits of accelerating financial data science to increase the responsiveness and robustness of models and the corresponding applications in finance and insurance. He will demonstrate the latest developments in accelerated end-to-end data science, Python APIs, and the democratization of rapid AI development and deployment. Use cases in credit risk, asset management and SupTech will be discussed.

Jochen will also report on his ecosystem activities regarding AI in financial services, covering an EU Horizon2020 project, the Frankfurt Institute of Risk Management and Regulation, the Frontiers Journal ‘AI in Finance’, GAIA-X, and the Frankfurt Digital Finance conference.

Talk 2 (Chaired by Gautier Marti): Why Causal AI is the Next Step Towards True AI

Maksim (Max) Sipos is the CTO of causaLens. He began his career at a hedge fund and then worked as a consultant and CTO. Max holds a PhD in Physics from the University of Illinois Urbana-Champaign.

Abstract:

Advances in artificial intelligence and machine learning in recent years have produced remarkable results in a wide variety of fields. Image recognition and computer vision have demonstrated great potential in their ability to detect disease, enable self-driving capabilities and produce realistic deepfake videos, among many others. Other significant successes in the field of machine learning include defeating the champions of intellectual games such as Jeopardy!, chess and Go, as well as advances in promising fields like protein folding.

These impressive advancements have been primarily driven by increases in computational power and deep learning. However, scaling this approach further will not lead to the development of general AI. In fact, estimates show that our brain operates at 20 W (similar to a low-power light bulb), while training a typical deep learning model produces more CO2 than a car. A fundamental shift in the direction of AI research is required to develop truly intelligent machines that can understand their environment and adapt to reach the goals they are designed to achieve.

The machine learning approaches in use today are unable to identify the true causal drivers behind variations in sales, revenues, stock prices or real estate yields. In current common practice, predictive models are, in essence, curve-fitting exercises that do not even attempt to identify cause and effect. As a consequence, models are driven by parameters that happened to correlate in training but do not have true predictive power when deployed in the real world. Correctly identifying causality is the key to overcoming this challenge, producing models that can forecast the future accurately and rapidly adapt to changing market conditions.

At causaLens we believe that a new theory of how to build intelligent machines is required. We believe that machines need to be capable of understanding “cause” and “effect” in order to advance machine learning and bring us one step closer to general AI.

Video Recording of the HKML Meetup on YouTube

- YouTube videos:

HKML S3E8 - ‘Why Causal AI is the Next Step Towards True AI’ by Maksim Sipos, PhD

Thanks to our patrons for supporting the meetup!

Check the Patreon page to join the current list: